On February 24, Microsoft introduced HoloLens 2 to the world at Mobile World Congress in Barcelona. And boy, what a launch it was! As with the launch of the first HoloLens four years earlier, this day will be remembered as one of the most important days in the history of computing – regardless of whether Microsoft succeeds in its endeavors or not.

This analysis is not a quick first impression. It is based on 12+ hours of research and 3+ years of experience developing Mixed Reality (HoloLens, VR, and since last week, Magic Leap) applications, mostly for the enterprise (manufacturing, maintenance, repairs, health, aviation and so on). This post is loooong. And detailed. And I had to split it into two parts because I’ve got too much to say about the whole announcement apart from just examining the heck out of the device itself.

Note: I’ve not been lucky enough to see HoloLens 2 in person yet, so please be aware of that while reading. This post is based on:

And many others, including tons of tweets, conversations with fellow Mixed Reality enthusiasts, and even some answers to my continuously nagging questions from Microsoft. Having said that, any mistakes in this post are my fault alone. If you find one, please let me know!

Microsoft’s Goals

With HoloLens 2, Microsoft is focusing exclusively on the enterprise, aiming this device squarely at first-line workers and other enterprise scenarios. Alex Kipman has stated that with the next generation of HoloLens, Microsoft has three focus areas: more immersion, more comfort, more value right out of the box. Let’s look at these in detail.

More Immersion

An Increased Field of View

By far the number one complaint against HoloLens was the limited field of view. People have described looking at holograms as if you were seeing them through a mail slot. In practice, after showing HoloLens to hundreds of people, I found that most people could get used to the limited field of view after about 5 minutes. However, most demos don’t last 5 minutes, and this gave HoloLens a worse reputation than it deserved. I’m not saying that the field of view wasn’t a problem, but it wasn’t as much of a limiting factor as the media and most first-hand experiences made it out to be. Clever application design and taking advantage of spatial sound could mitigate most of the issues and make living with holograms not merely bearable, but useful and even pleasant.

A larger field of view is of course a very welcome change. And Microsoft has increased the diagonal field of view from 34 to 52 degrees. Most of the growth is vertical, meaning the picture is no longer 16:9, but has an aspect ratio of 3:2. This should take care of the “mail slot” comments. The pixel count and the viewable area have more than doubled. Luckily, the HoloLens ditched Intel’s processors (Mr. Kipman called this decision a “no-brainer” due to Intel’s shortcomings in the power efficiency area). HoloLens 2 will sport a decent Qualcomm Snapdragon 850, which should have no problem keeping up with the increased demands on the GPU.
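To make the field of view numbers concrete, here is a rough back-of-the-envelope sketch in Python. It assumes a flat, rectilinear display and takes the diagonal angles and aspect ratios quoted above at face value; the function name `fov_components` is mine, and the real optics may well behave differently.

```python
import math

def fov_components(diagonal_deg, aspect_w, aspect_h):
    """Split a diagonal field of view into horizontal and vertical angles.

    Assumption: a flat, rectilinear image plane, so the half-angles are
    related through their tangents rather than linearly.
    """
    half_diag = math.tan(math.radians(diagonal_deg) / 2)
    norm = math.hypot(aspect_w, aspect_h)
    half_w = half_diag * aspect_w / norm
    half_h = half_diag * aspect_h / norm
    h_deg = 2 * math.degrees(math.atan(half_w))
    v_deg = 2 * math.degrees(math.atan(half_h))
    area = 4 * half_w * half_h  # image-plane area at unit distance
    return h_deg, v_deg, area

# Figures quoted in the text: 34 degrees at 16:9 vs. 52 degrees at 3:2
old_h, old_v, old_area = fov_components(34, 16, 9)
new_h, new_v, new_area = fov_components(52, 3, 2)

print(f"HoloLens 1: {old_h:.1f} x {old_v:.1f} degrees")
print(f"HoloLens 2: {new_h:.1f} x {new_v:.1f} degrees")
print(f"Viewable area ratio: {new_area / old_area:.2f}x")
```

Under these assumptions the vertical angle grows by a larger factor than the horizontal one, and the area ratio comes out above 2, which is consistent with the “more than doubled” claim.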

New Display Technology

For the display, Microsoft has introduced a novel approach by combining two previously existing technologies: MEMS displays and waveguides. Waveguides have been used in the previous HoloLens, as well as with Magic Leap One, and a lot of other AR headsets. However, the images projected into the waveguides are now created by high precision laser beams – reflected from a set of mirrors vibrating at a crazy 54,000 times a second. To design the entire optics system, Microsoft has used the vast computing capacity of its Azure cloud to simulate the path of the different colored laser beams through the waveguide almost at the photon level. And I can’t even fathom the incredibly intricate manufacturing process that’s needed for such precision.

The result is a picture that retains the high pixel density of the original HoloLens, while more than doubling the viewable area. It is also much brighter, capable of 500 nits, and judging from some of Microsoft’s materials, should be suitable for outdoor usage. (Bright sun causes the image of the current HoloLens to be completely washed out).

Microsoft is also ditching the 3-layer waveguide arrangement they used in HoloLens 1 (one layer each for red, green and blue), and replacing it with a dual waveguide configuration (one for red and green, and one for green and blue). This should help with the color inconsistencies somewhat, but I’ll have to see what it means in practice.

The unknown factor of this new optics system is of course the image quality. Waveguides are prone to have image quality issues, such as colorization. We have to see how much worse things get with the laser projection system. Most reviewers have not mentioned image quality at all (this is different from rendering quality, which is clearly better). This indicates to me that it is more or less on par with the first HoloLens or Magic Leap – any striking differences would have been talked about. However, image quality is much less important in an enterprise scenario.

But there’s another reason why a larger field of view is important for HoloLens 2. And that is the feature that pretty much stole the already super strong show: direct hand interaction.

Direct Hand Interaction

Since the first ever HoloLens demo, people wanted to touch the holograms, to interact with them the way they interact with real objects – with their hands. Push buttons, grab and move objects – or just poke them to see if they are real.

While it was possible to detect the hands of a user (as long as they were in one of two poses), direct interaction never caught on with HoloLens. The reason: the field of view was so limited that once you got close enough to touch a hologram, you could only see a very limited part of it. Because of the extreme clipping, most designers kept the holograms at the recommended distance of 1.5 – 5 meters (5-16 feet). This distance is, of course, out of reach, so remote manipulation (using the head’s gaze as a cursor and the hand as the mouse button) was the preferred interaction model with HoloLens.

We got a taste of direct manipulation with Magic Leap (especially the Tónandi experience), which has a larger field of view than the original HoloLens. But most of the applications are still using the controller instead of direct manipulation.

But HoloLens 2 does not come with a controller, and when asked, Mr. Kipman has mostly evaded the question. So, direct hand manipulation is the number one way to get in touch with the holograms. You can poke them, resize them, rotate them, move them. You can press buttons, turn dials. You can even play a holographic piano, and as we saw in the incredibly fun and energetic demo of Julia Schwarz, the hand tracking is sensitive and precise enough to understand which chord you played! HoloLens 2’s hand tracking recognizes 25 points per hand, which is more than the 8 points per hand on the Magic Leap. HoloLens 2’s hand tracking also seems super robust based on the videos.
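To give a feel for what an application can do with tracked hand points, here is a minimal Python sketch of two common checks: a pinch (thumb tip close to index tip) and a poke (index tip inside a spherical hologram). This is not the HoloLens API. The joint names, the `is_pinching` and `is_touching` helpers, and the 2 cm threshold are all assumptions made up for illustration.

```python
import math
from typing import Dict, Tuple

Point = Tuple[float, float, float]  # joint position in meters

def is_pinching(joints: Dict[str, Point], threshold_m: float = 0.02) -> bool:
    """True when thumb tip and index tip are closer than the threshold.

    The joint names and the 2 cm default are hypothetical.
    """
    return math.dist(joints["thumb_tip"], joints["index_tip"]) < threshold_m

def is_touching(joints: Dict[str, Point], center: Point, radius_m: float) -> bool:
    """True when the index fingertip is inside a spherical hologram."""
    return math.dist(joints["index_tip"], center) <= radius_m

# Two made-up hand poses, reduced to the two joints these checks need
open_hand = {"thumb_tip": (0.00, 0.00, 0.40), "index_tip": (0.08, 0.03, 0.40)}
pinched_hand = {"thumb_tip": (0.00, 0.00, 0.40), "index_tip": (0.01, 0.00, 0.40)}

print(is_pinching(open_hand))
print(is_pinching(pinched_hand))
print(is_touching(pinched_hand, center=(0.0, 0.0, 0.42), radius_m=0.05))
```

With more points per hand, checks like these can cover more fingers and more poses, which is presumably part of what makes demos like the piano possible.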

This increased hand tracking quality is made possible by the new depth sensor that allows unprecedented precision and power efficiency. It has excellent short and long-range capabilities with super low noise that not only helps with hand tracking, but also can create a much better depth map of the environment and can even be used for semantic understanding of the device’s surroundings. (The new depth sensor is also being sold as a separate “Azure Kinect DK” device).

The Bentley demo Microsoft is showing off at the Mobile World Congress has blown the minds of many who were lucky enough to try it. The demo involves two users, who can both pick and manipulate virtual objects, and see what the other user has in their hands. Hand tracking is so precise that during the demo, participants are asked to give the objects to the other person and take their objects instead! All of this works naturally, without any strange gestures or commands to learn. Just as if you were exchanging real objects.

I’m super excited to see for myself how the direct hand interaction works. But from the demos and videos (and I watched a lot of them), it seems like Microsoft has got it right, and with a well-designed interface that follows real world objects (dare I say skeuomorphic?), interaction will be a breeze.

Instinctual Interaction

Of course, there are other interaction types on HoloLens 2. Voice commands (which work offline), dictation (you’ll need an online connection for this), gaze (a pointer that follows your head), Bluetooth keyboard and mouse are all at the disposal of the designer. But so is eye tracking, which has been shown to detect that you are approaching the bottom of a web page and scroll it up all by itself.

Microsoft calls all these interaction types “Instinctual Interaction”, because you instinctively know how to use a button, turn a dial, grab and move an object, dictate, etc. I have a feeling this is just a re-branding of the term “NUI” (Natural User Interface), which is based on the same principles – bringing what you’ve learnt in the real world to human-computer interactions.

Eye Tracking

Speaking of eye tracking, it is handled by two tiny cameras close to the nose, at the bottom of the glasses. It remains to be seen how precise and useful these are for interaction, but they also have two other purposes. They automatically calibrate the HoloLens according to your IPD (inter-pupillary distance) – this is key for proper depth perception and reducing eye strain. The eye tracking cameras also work as an iris scanner to securely identify users the moment they put on the headset. If you’ve ever typed a password in a VR or AR headset, you’ll welcome the relief of instant login.

Microsoft has not implemented foveated rendering in HoloLens 2. Foveated rendering in short is the technique that only creates high definition visuals around the point you’re looking at – and keeps the visuals blurry outside the small area you’re focusing on, where your eyes are not sensitive to details anyway. Foveated rendering makes the job of the GPU easier while – in theory – keeping the perceived image quality the same. Technically, they could add this later as an upgrade. Eye tracking is available, and the Snapdragon 850 supports foveated rendering.
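To illustrate the idea behind foveated rendering, here is a toy Python sketch that lowers the rendering resolution of screen tiles the farther they are from the gaze point, and estimates the relative pixel cost. Everything in it is an assumption for illustration: the 5 degree full-detail zone, the linear falloff, the tile grid, and the 44 x 30 degree field of view. It also treats angles as flat offsets, which is a simplification. Real GPU implementations work quite differently under the hood.

```python
import math

def shading_scale(ecc_deg, fovea_deg=5.0, falloff_deg=20.0, min_scale=0.25):
    """Fraction of full resolution for a tile at a given angular distance
    from the gaze point. All thresholds are hypothetical."""
    if ecc_deg <= fovea_deg:
        return 1.0  # full detail where the eye is actually looking
    t = min((ecc_deg - fovea_deg) / falloff_deg, 1.0)
    return 1.0 - t * (1.0 - min_scale)

def relative_cost(gaze, tiles_x=12, tiles_y=8, hfov=44.0, vfov=30.0):
    """Average pixel cost across all tiles, relative to rendering
    everything at full resolution (1.0 means no savings)."""
    total = 0.0
    for ix in range(tiles_x):
        for iy in range(tiles_y):
            # Tile center as an angular offset from the display center
            x = (ix + 0.5) / tiles_x * hfov - hfov / 2
            y = (iy + 0.5) / tiles_y * vfov - vfov / 2
            ecc = math.hypot(x - gaze[0], y - gaze[1])
            scale = shading_scale(ecc)
            total += scale * scale  # pixel count scales with scale squared
    return total / (tiles_x * tiles_y)

print(f"Relative cost, looking at the center: {relative_cost((0.0, 0.0)):.2f}")
```

The point of the sketch is only that the savings come from the periphery: the tiles near the gaze point stay at full detail, and everything else gets progressively cheaper.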

More Comfort

Microsoft’s aim with the new HoloLens is to make it a tool for first-line workers. Office jobs already give people a ton of computing power in the form of PCs, laptops, mice and keyboards. However, in the field, people need both of their hands to fix an airplane engine, install electrical wiring or even perform surgery on a patient. They work in 3 dimensions, on 3-dimensional problems, instead of 2D documents and tables. They need access to the right information, at the right time, and at the right point in space. And they need to use their devices throughout the day, even if just for short intervals at a time.

One of the most striking things when just looking at the new HoloLens vs the old is how the design of the headset has been changed. The heavy electronics have been split into two – with the computing part and the battery being moved to the back of the head. This puts the center of mass right above your spine instead of on your forehead, significantly reducing muscle strain in the neck. The headset has also been cushioned in a way that is super comfortable, and you can wear it for a prolonged time. All of these make HoloLens 2 feel significantly lighter and more comfortable than HoloLens 1 did, despite being only 13 grams (0.03 pounds) lighter.

Of course, all computers give out heat, and a state-of-the-art AR headset is no different. However, judging from the heat dissipation mastery we’ve seen on HoloLens 1, and the extra cooling areas available for the unit at the back of the head, I don’t expect this to be a problem.

Speaking of the computing + battery unit: some people even call it a bun. That’s a fitting name which made me wonder how it would fare for users who have an actual bun at that point of their head. It will also make it harder to lie back in a high-back chair, as the “bun” will not allow your head to rest on the headrest. Of course, this is more of an issue for the knowledge worker than the first-line worker Microsoft is aiming the new headset at.

Fitting

Putting on HoloLens 2 is simple – just pull it over your head like you would with a baseball cap and tighten the dial at the back. I love Magic Leap’s solution for the same problem, but Microsoft’s approach is more practical and probably more solid when you are moving your head to look inside and around equipment or look up at a car on a lift. It also seems like HoloLens 2 is a one-size-fits-all device, which is again a welcome feature for workplaces where several users share one HoloLens. However, you do have to calibrate the eye tracking for a new user, which takes about 30 seconds. Ah, and the big thing: unlike with Magic Leap, you can fit your actual prescription glasses under the HoloLens.

Flip It Up!

Another striking new feature of the headset (again, super useful for first-line workers) is that the visor at the front can be flipped up. This allows an unobstructed view of the environment as well as eye contact when talking to peers. HoloLens 1 also allowed the user to have eye contact with people around them, but it did require extra effort from those not wearing the HoloLens, much like a pair of (moderately dark) sunglasses would.

Customization

Microsoft is also launching the HoloLens Customization Program that allows partners to tweak the hardware itself to fit their environmental needs. The first such partner is Trimble, who have created a hard hat solution that only keeps the front and back parts of the HoloLens, and completely redesigns the rest – including the flip-up mechanism, the fitting mechanism and even the way the wires travel.

Peripheral Vision

In a factory or construction environment, it is very important not to have your peripheral vision constrained. A thick frame around the glasses, such as Magic Leap’s, has proven to be a showstopper for some of my clients just for this reason. You need to be able to see an approaching cart, you must see where you’re stepping – no matter how magical or useful the holographic world is, these safety rules are way more important.

With HoloLens 2, your vision of the real world is not constrained, especially with the flip-up feature. This may look like a small box to tick for Microsoft, but it shows their understanding of the target environment and commitment to truly bring a useful device to market.

Value Right Out of the Box

One of the big problems with HoloLens was that to get some actual value from it, companies had to hire expensive consultants and developers, and embark on a many-month journey just to get to a prototype. A prototype they usually couldn’t get actual value out of, apart from showing it at trade shows and creating cool PR videos. While creating dozens of such demos paid the bills and has been very educational for me personally, it was very rare that a company went beyond the prototype phase. Even a pilot where they would be able to measure the ROI of a small, but actually working system rarely happened. This is not just my experience, it is what I’ve heard from other consultants in the space as well. The real success stories, with wide HoloLens deployments that generate value, are rare. This is natural as the technology is so new, and a lot of the exploratory prototypes ended up with “This has great potential, but let’s get back to it in a few years, when the right device is available”.

For Microsoft, the problem with this was that they couldn’t sell devices and hasten the MR future they envisioned. Even the largest enterprises only bought a few HoloLenses, created a prototype or a demo for a trade show, but never put the – otherwise great – ideas into production, due to the shortcomings of the original HoloLens. There were some exceptions of course, but not enough to really move the needle.

Enter HoloLens 2, with a clear and ruthless focus on increased comfort and usability for first-line workers. Every decision Kipman and his team made designing HoloLens 2 screams “enterprise” – and it is an excellent strategy. But something was still missing. Why would an average enterprise buy a HoloLens 2 if they had to go and get vendors to develop applications that they can actually use? What good is an amazing head-worn computer without the software?

Microsoft has been talking to its customers and watching what its partners were building. They have identified a few common applications and created turnkey solutions that aim to be killer apps.

“Remote Assist” lets a worker get help from a more experienced peer through a secure video call, with the ability to place drawings, arrows and even documents in the real world.

“Guides” can help you learn how to perform tasks, such as fixing an engine, through holographic instructions that guide you through the steps by understanding where the engine is and pinpointing areas of interest.

And “Layout” helps you plan a space, such as a factory floor, a museum or an operating room, in both VR and AR.

Microsoft hopes that these typical first party apps (I’ve created a few prototypes like these myself) will help answer the question of what the actual value of a HoloLens is. I still feel that the real killer app is missing, or maybe being secretly developed – but for the right customer, even these apps can justify the purchase of HoloLens, and are most certainly cheaper than hiring a team of professionals to develop them from scratch.

Summary

So, has Microsoft accomplished what they set out to do and created the perfect enterprise AR headset? I believe so. They are ticking all the boxes, and they are the right boxes at the current state of the industry. Other companies will no doubt follow, with more specialized, cheaper, lighter headsets that may be better for a specific task. But it is clear that when it comes to Mixed Reality and business use, Microsoft is ahead of the pack with a comfortable and very capable headset that has the ecosystem behind it.

Speaking of the ecosystem… Microsoft’s announcement wasn’t just about the enterprise. Mr. Kipman has stated multiple times that they are thinking hard about the consumer market. They need a more immersive display, better battery, a lighter, better design, and a $1000 price to get there. And he said that Microsoft is working towards that goal. And some of the – seemingly enterprise-oriented – services announced today have serious consumer implications. Azure Remote Rendering allows for a less powerful headset (see also: weight, comfort, battery), and Microsoft is gathering invaluable field experience here – starting now. Azure Spatial Anchors are the beginning of AR Cloud, and again – Microsoft is gathering invaluable field experience, and laying the groundwork there. Azure Kinect DK can be super useful for ambient computing, even in homes (paired with microphones). I’ll talk about these in a future blog post – this one is already way too long.

Do you have a thought on the above? Clarification? Did I get something wrong? Let me know in the comments!

![too-much-work-not-enough-time[1].jpg](https://vbandi.net/wp-content/uploads/2018/03/too-much-work-not-enough-time1.jpg?w=1108)

![Fisher-Price-Toy-RC-Remote-Control-Thomas-The[1].jpg](https://vbandi.net/wp-content/uploads/2018/03/fisher-price-toy-rc-remote-control-thomas-the1.jpg?w=1108)

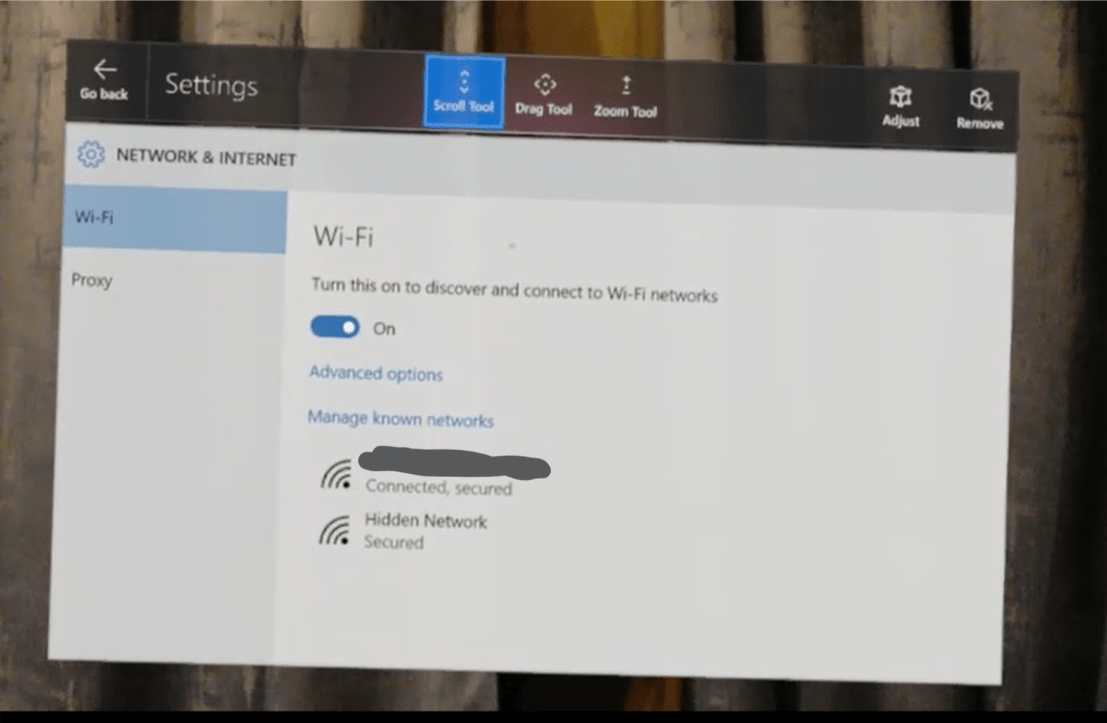

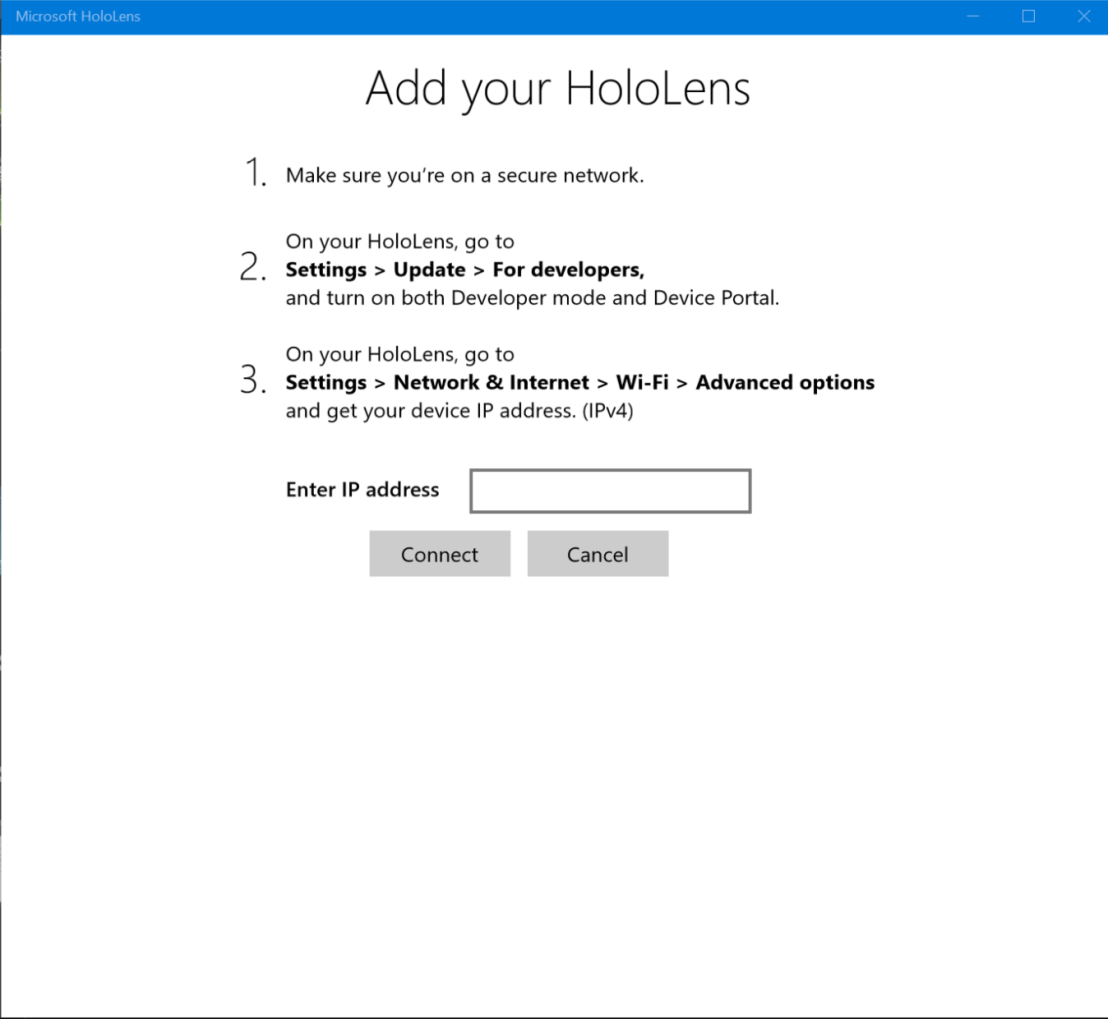

As you can see, the computer has to be connected to the Internet (and has to have a Wi-Fi adapter) for the Mobile hotspot to be enabled. If you can’t see the above warning, all is OK – turn on the “Share my Internet connection with other devices” checkbox.

As you can see, the computer has to be connected to the Internet (and has to have a Wi-Fi adapter) for the Mobile hotspot to be enabled. If you can’t see the above warning, all is OK – turn on the “Share my Internet connection with other devices” checkbox.